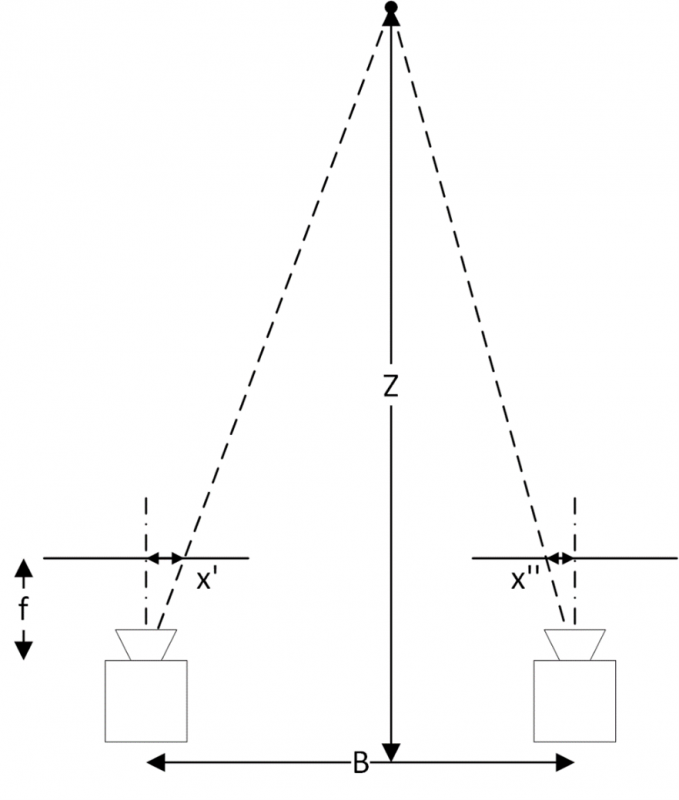

Image-based dense point cloud creation is an easy, low-cost approach to the three-dimensional digitization of small- and large-scale objects and surfaces, and it is especially attractive for cultural heritage documentation. In this method, the reprojection error on conjugate keypoints indicates the accuracy of both the model and the keypoint localisation. However, sequential registration of the images of large-scale historical buildings creates a large cumulative registration error, so the accuracy of the model should be increased with control points or loop-closure imaging. In this study, the historical Sultan Selim Mosque, built in the sixteenth century by the Great Architect Sinan, was modelled via a photogrammetric dense point cloud, and the point cloud model was registered into the georeference system using control points. The reprojection error and the number of keypoints were evaluated for different base/length ratios, and the georeferencing accuracy was evaluated for many configurations of control points, with and without loop-closure imaging.

Image sequence analysis for 3-D modeling is an aim of primary importance in both Photogrammetry and Computer Vision. Both disciplines approach this issue, but with different procedures, and the resulting 3-D model of the captured object differs in accuracy and in the time needed to build it.

In photogrammetric orientation, the first issue typically concerned how to determine initial values ∆. Fortuitously, this was relatively straightforward for stereo geometry: two rotations could be assigned an initial value of zero, the relative rotation about the optical axis could be estimated from the image point distribution, and the initial values for translation were most often taken as zero. The absolute orientation (AO) was performed with a 3D similarity transformation (Eqn. 7) or, less frequently, the collinearity model. For AO, closed-form and quasi least-squares solutions for the 3D similarity transformation are well known, so computing a rigorous AO via a linear least-squares solution does not pose any practical difficulties. A stereo pair could therefore be oriented reliably, with certain constraints upon the degree of convergence between the two optical axes.

Given that this approach was effectively limited to two-image stereo networks, and that stereo geometry is not optimal from an accuracy standpoint, an alternative was sought to accommodate the convergent multi-image geometry shown in Figs. 1. This gave rise to a second orientation scenario. A number of object points (minimum of 4) were assigned preliminary XYZ coordinates (measured or arbitrary), and from these, two or more images were resected via closed-form resection, establishing the orientation parameters O for these images. Spatial intersection then provided the XYZ coordinates of object points (X). From the new object point coordinates, further images were resected and further points intersected until initial values were established for all parameters, after which bundle adjustment refined the approximate values. This process suited the sequential, monoscopic measurement of images. It was adopted in the 1970s and 1980s for industrial photogrammetry systems, and it remains in common use today. It had two main drawbacks, which were not viewed as such at the time: initial XYZ coordinate values were needed for at least 4 points, and careful labelling of image points was required to ensure correct correspondences between images.

At the same time, the computer vision community was engaged in popularising the essential matrix approach, albeit two images at a time. Nevertheless, some developments in computer vision were curious given the then state of the art in photogrammetry. For example, the camera calibration approach of Tsai (1987) required not only the provision of an object point array with known XYZ coordinates, but also a multi-stage procedure. Why, one might ask, were the methods of photogrammetric orientation not adopted in computer vision? Of the no doubt many contributing factors, four come to mind: (i) metrically accurate results were not being sought; (ii) there was a preference to work with pixel coordinates and to ignore lens calibration; (iii) the need to assign object point coordinates and determine initial values was to be avoided; and (iv) there was no desire whatever to get involved with manual point labelling, as correspondences were to be determined automatically, with a percentage of these accepted as being potentially erroneous.
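The reprojection-error criterion used to judge the dense point cloud model can be made concrete with a minimal sketch. It assumes an ideal, distortion-free pinhole camera. The principal point sits at the image origin, the camera looks along its +z axis, R rotates object coordinates into the camera frame, C is the projection centre and c the principal distance. These conventions and the function names are illustrative assumptions, not the study's processing software.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Sequence[Sequence[float]]


def project(X: Vec3, R: Mat3, C: Vec3, c: float) -> Tuple[float, float]:
    """Project object point X with a distortion-free pinhole model.

    Assumed convention: R rotates object-space vectors into the camera frame,
    C is the projection centre, c the principal distance, and the camera looks
    along its +z axis with the principal point at the image origin.
    """
    d = [X[i] - C[i] for i in range(3)]
    u, v, w = (sum(R[r][k] * d[k] for k in range(3)) for r in range(3))
    if w <= 0:
        raise ValueError("point lies behind the camera")
    return c * u / w, c * v / w


def rms_reprojection_error(points: Sequence[Vec3],
                           observations: Sequence[Tuple[float, float]],
                           R: Mat3, C: Vec3, c: float) -> float:
    """RMS image distance between measured keypoints and projected points."""
    sq = 0.0
    for X, (x_obs, y_obs) in zip(points, observations):
        x, y = project(X, R, C, c)
        sq += (x - x_obs) ** 2 + (y - y_obs) ** 2
    return math.sqrt(sq / len(points))


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    pts = [(0.0, 0.0, 10.0), (1.0, 2.0, 10.0)]
    obs = [(0.0, 0.0), (0.8, 1.0)]  # second keypoint is 0.3 units off in x
    print(rms_reprojection_error(pts, obs, identity, (0.0, 0.0, 0.0), 5.0))
```

In a real multi-image network the same residual would be accumulated over every image in which a keypoint is observed. It would also include lens distortion terms.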
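The closed-form least-squares solution for the 3D similarity transformation used in absolute orientation can be sketched briefly. This example swaps in Umeyama's SVD-based method, one well-known closed-form solution (Horn's quaternion method is another). It is not necessarily the formulation of the original Eqn. 7. The sketch assumes NumPy and estimates a scale s, rotation R and translation t so that each control point satisfies Y ≈ s·R·X + t. The function name is hypothetical.

```python
import numpy as np


def similarity_transform(src, dst):
    """Closed-form 3D similarity transform (Umeyama's SVD method).

    src, dst: (n, 3) arrays of corresponding points (n >= 3, not collinear),
    e.g. model coordinates and georeferenced control point coordinates.
    Returns (s, R, t) minimising sum_i ||dst_i - (s * R @ src_i + t)||^2.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    mu_src, mu_dst = src.mean(axis=0), dst.mean(axis=0)
    a, b = src - mu_src, dst - mu_dst
    n = src.shape[0]

    cov = b.T @ a / n                      # 3x3 cross-covariance of centred sets
    U, D, Vt = np.linalg.svd(cov)
    S = np.eye(3)
    if np.linalg.det(U) * np.linalg.det(Vt) < 0:
        S[2, 2] = -1.0                     # force a proper rotation, not a reflection
    R = U @ S @ Vt

    var_src = (a ** 2).sum() / n           # mean squared distance from centroid
    s = np.trace(np.diag(D) @ S) / var_src
    t = mu_dst - s * (R @ mu_src)
    return s, R, t


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    model = rng.normal(size=(6, 3))        # synthetic model coordinates
    ang = np.deg2rad(30.0)
    R_true = np.array([[np.cos(ang), -np.sin(ang), 0.0],
                       [np.sin(ang),  np.cos(ang), 0.0],
                       [0.0, 0.0, 1.0]])
    ground = 2.5 * model @ R_true.T + np.array([10.0, -4.0, 3.0])
    s, R, t = similarity_transform(model, ground)
    print(round(float(s), 6), np.round(t, 6))
```

Because the solution is closed-form, it needs no initial values. This is why it also serves well for seeding a subsequent rigorous adjustment.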
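Spatial intersection supplies object point coordinates X once image orientations are known. It can be illustrated by finding the least-squares point closest to two or more image rays. This ray-based formulation is a simplification chosen for brevity; a photogrammetric implementation would usually intersect through the collinearity equations. Each ray is assumed to be given by a projection centre and a direction vector, and the function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _det3(m: List[List[float]]) -> float:
    """Determinant of a 3x3 matrix."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def intersect_rays(centres: Sequence[Vec3], directions: Sequence[Vec3]) -> Vec3:
    """Least-squares intersection of image rays C_i + lambda * d_i.

    Solves (sum P_i) X = sum P_i C_i with P_i = I - u_i u_i^T (u_i = unit d_i),
    i.e. the point minimising the summed squared distances to all rays.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for C, d in zip(centres, directions):
        norm = math.sqrt(sum(x * x for x in d))
        u = [x / norm for x in d]
        for r in range(3):
            for k in range(3):
                p = (1.0 if r == k else 0.0) - u[r] * u[k]  # projector onto ray normal plane
                A[r][k] += p
                b[r] += p * C[k]
    det = _det3(A)
    if abs(det) < 1e-12:
        raise ValueError("rays are (nearly) parallel; intersection is ill-conditioned")
    X = []
    for col in range(3):
        # Cramer's rule: replace column `col` of A with b.
        m = [[b[r] if k == col else A[r][k] for k in range(3)] for r in range(3)]
        X.append(_det3(m) / det)
    return X[0], X[1], X[2]


if __name__ == "__main__":
    # Two projection centres observing the same object point from a 2-unit base.
    print(intersect_rays([(0.0, 0.0, 0.0), (2.0, 0.0, 0.0)],
                         [(1.0, 1.0, 5.0), (-1.0, 1.0, 5.0)]))
```

The determinant check reflects the geometric point made in the text. As the rays approach parallel (a small base/distance ratio), the intersection becomes poorly conditioned.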
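The two-image essential matrix approach popularised in computer vision rests on a simple relation. For a relative rotation R and baseline t between calibrated views, E = [t]× R, and the normalised image coordinates of a conjugate point satisfy x₂ᵀ E x₁ = 0. The following pure-Python sketch assumes the convention X₂ = R X₁ + t and distortion-free normalised coordinates (x, y, 1). All names are hypothetical.

```python
import math
from typing import List, Sequence

Mat3 = List[List[float]]


def skew(t: Sequence[float]) -> Mat3:
    """Cross-product matrix [t]x, so that skew(t) applied to v equals t x v."""
    return [[0.0, -t[2], t[1]],
            [t[2], 0.0, -t[0]],
            [-t[1], t[0], 0.0]]


def matmul(A: Sequence[Sequence[float]], B: Sequence[Sequence[float]]) -> Mat3:
    """Plain 3x3 matrix product."""
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def essential_matrix(R: Sequence[Sequence[float]], t: Sequence[float]) -> Mat3:
    """E = [t]x R for the convention X2 = R X1 + t."""
    return matmul(skew(t), R)


def epipolar_residual(E: Mat3, x1: Sequence[float], x2: Sequence[float]) -> float:
    """x2^T E x1 for normalised homogeneous image points (x, y, 1)."""
    Ex1 = [sum(E[i][k] * x1[k] for k in range(3)) for i in range(3)]
    return sum(x2[i] * Ex1[i] for i in range(3))


if __name__ == "__main__":
    ang = math.radians(10.0)
    R = [[math.cos(ang), 0.0, math.sin(ang)],
         [0.0, 1.0, 0.0],
         [-math.sin(ang), 0.0, math.cos(ang)]]
    t = (1.0, 0.0, 0.0)
    X1 = (0.5, -0.2, 4.0)                       # point in the camera-1 frame
    X2 = [sum(R[i][k] * X1[k] for k in range(3)) + t[i] for i in range(3)]
    x1 = [X1[0] / X1[2], X1[1] / X1[2], 1.0]    # normalised image coordinates
    x2 = [X2[0] / X2[2], X2[1] / X2[2], 1.0]
    print(epipolar_residual(essential_matrix(R, t), x1, x2))  # close to zero
```

This relation needs neither object point coordinates nor initial values, only conjugate image points. That property matches the preferences listed for the computer vision community. Note that E fixes the baseline only up to scale.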
